
Transform Your Staff from Passive AI Users into Human-in-the-Loop (HITL) Operators

Equip your workforce with the skills to critically manage and audit AI, the most effective way to secure your company's data integrity. Let us help you deploy a customised corporate curriculum that turns automated speed into verifiable business value.

As organisations rapidly adopt generative AI, they face a silent operational crisis: teams are deploying highly polished, seemingly error-free outputs that hide deep logical flaws, exposing the business to systemic data liabilities and cognitive atrophy. To survive this shift, enterprises must move past the illusion of effortless expertise and transition their workforces away from blind, automated shortcuts.

True digital acceleration does not come from replacing human judgment, but from anchoring it at the centre of the technology stack. By instituting a rigorous, cross-departmental learning path, businesses can establish the critical guardrails and "Centaur" workflows needed to transform their staff from passive, vulnerable AI users into highly vigilant, strategically aligned Human-in-the-Loop (HITL) operators who can confidently audit, verify, and drive high-trust corporate growth.

The journey into AI is unique to every client, both in how they envisage AI enhancing their business and in how they wish AI to be adopted and implemented across the organisation.

With that in mind, many of our more strategic AI training programmes are tailored to a specific organisation.

That said, there is a common pathway that we follow when working with a client to create an AI strategy and an AI learning pathway.

Our AI Steering Wheel Series of four workshops paves the way for successful AI adoption, ensuring that data governance and human oversight are given high importance wherever AI is put to work.

This article highlights the key challenges facing organisations in today's world of AI-driven content and development, and identifies the core learning and development requirements for upskilling your teams to implement AI solutions in the right way for your organisation.

AI Steering Wheel Learning Path

Our AI Steering Wheel series of workshops is exactly what it sounds like: everything you need to know to steer your AI experience in the right direction.

To get you started we have a half-day workshop, How To Build a Safe AI Usage Framework, that will take you through the key considerations and actions required for a successful experience with AI within your organisation. After attending this workshop you will be ready to work through designing, developing and delivering your four-stage AI Steering Wheel learning pathway.

Workshop 1: Solving the "Blind Trust & Broken Logic" Problem

This workshop focuses on AI Literacy and shattering the illusion that a highly polished AI output automatically translates to accurate, verified business logic.

Generative AI models are designed to sound confident and look professional, regardless of whether their calculations are correct. "Shattering the illusion" is the deliberate process of breaking a team's blind trust in these tools by showing them exactly how easily a flawless-looking report can hide a catastrophic structural error.

There are two potential illusions that arise from using generative AI tools to build solutions:

The Presentation Illusion

AI generates beautifully structured SQL code, a perfectly formatted Python script, or an interactive chart with professional colour schemes

Because the final presentation looks clean and contains no syntax errors, the human brain automatically assumes the underlying mathematical calculations and business logic must be correct

The Ease Illusion

A user types a short prompt, and a complex data asset appears five seconds later

The execution was effortless, so the user feels a false sense of personal pride and success

They believe they have successfully engineered a solution, bypassing the hard, manual work of validation and cross-checking

In this workshop we look at an AI-generated report that looks entirely correct on the surface but contains an intentional, deeply buried logical error (such as a double-counting or data leakage scenario). The objective is to help delegates realise a critical truth: AI is an accelerator of tasks, not a replacement for human judgment and verification.
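To make the double-counting scenario concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `orders` and `shipments` tables, their values, and the `reconciles` helper are hypothetical and invented purely for illustration. The query runs without any error, yet the join repeats an order's amount once per shipment, so the reported revenue is inflated.

```python
import sqlite3

# Hypothetical toy data: two orders, one of which ships in two parcels.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY, amount REAL);
    CREATE TABLE shipments (shipment_id INTEGER PRIMARY KEY, order_id INTEGER);
    INSERT INTO orders VALUES (1, 100.0), (2, 50.0);
    INSERT INTO shipments VALUES (10, 1), (11, 1), (12, 2);
""")

# Plausible-looking query: it runs cleanly, but the join produces one row
# per shipment, so order 1 contributes its amount twice.
reported_revenue = conn.execute("""
    SELECT SUM(o.amount)
    FROM orders AS o
    JOIN shipments AS s ON s.order_id = o.order_id
""").fetchone()[0]

# Independent control total taken straight from the source table.
control_total = conn.execute("SELECT SUM(amount) FROM orders").fetchone()[0]


def reconciles(reported, control, tolerance=0.01):
    """Return True when the reported figure matches the control total."""
    return abs(reported - control) <= tolerance


print(reported_revenue, control_total, reconciles(reported_revenue, control_total))
```

With this toy data the report shows 250.0 against a control total of 150.0, so the reconciliation check fails. Comparing every AI-generated metric to an independently derived control total is one simple way for a human reviewer to catch this class of error.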

Key objectives for this workshop will be:

Fixing the High Cost of Unverified AI Data

Preventing Flawless Formatting from Masking Flawed Metrics

Breaking the Cycle of Blind Trust in AI Outputs

Workshop 2: Solving the "Rogue Pipeline & Operational Chaos" Problem

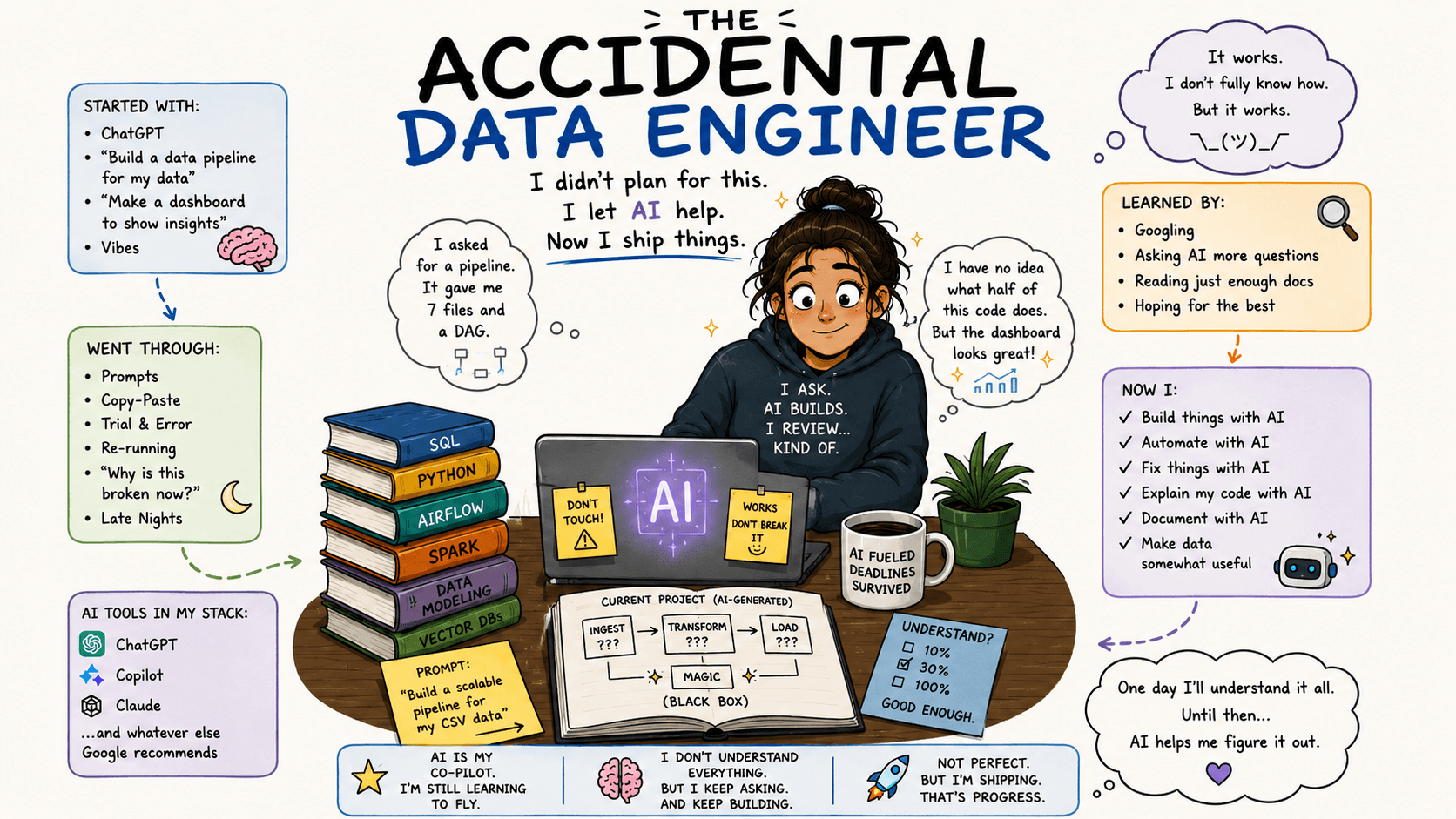

This workshop focuses on designing the HITL Workflow. Historically, data was locked in a central repository, tightly guarded by a technical data team. Today, generative AI has handed every business user a digital sledgehammer to bypass those gates. This shift has created an operational environment known as "Shadow Data Engineering", and the business users working within it are often described as "Accidental Data Engineers".

There are three major threats when it comes to Shadow Data Engineering and Accidental Data Engineers:

The Proliferation of "Shadow Truths"

In the past, if a marketing manager and a finance manager disagreed on quarterly revenue metrics, they had to go to the central data team to resolve the discrepancy

Armed with AI, both managers can now independently query raw data tables, apply their own prompt-engineered definitions, and build separate, competing dashboards

Executive meetings grind to a halt because different departments present conflicting "truths". Nobody can agree on which data is real, because the underlying pipelines were generated in siloed AI chats with zero standardisation

The Infrastructure Traffic Jam

Professional data engineers write code defensively and use incremental loading, indexing, and caching to ensure their data queries don't strain the company's live servers

An accidental data engineer using AI may not know what server load or join optimisation is, so when they ask AI for a report, the generated code can overload the database

Dozens of unoptimised, rogue AI scripts run simultaneously across the company. They lock tables, drain computing capacity, send cloud service bills soaring, and can even crash customer-facing production applications

The "Orphaned Code" Liability

Software engineering relies heavily on documentation, version control such as Git, and peer reviews to ensure that if an engineer leaves the company, someone else can maintain their work

Business users build complex data pipelines inside isolated AI chat histories. They do not document the code, commit it to a central repository, or explain the logic to their peers

When that employee leaves the company or the pipeline inevitably breaks due to a routine database update, the remaining team inherits a completely undocumented "black box"

The data team must drop their strategic roadmap to perform forensic audits on tangled AI code they didn't write

To avoid rogue pipelines, you need to establish structural boundaries that promote innovation while protecting the company's infrastructure.

The key objectives for this workshop will be:

Building Human Accountability into High-Speed AI Workflows

Insulating Core Infrastructure from Accidental Data Engineers

Where to Automate and Where to Enforce Human Judgment

Workshop 3: Solving the "Hallucination & Guesswork" Problem

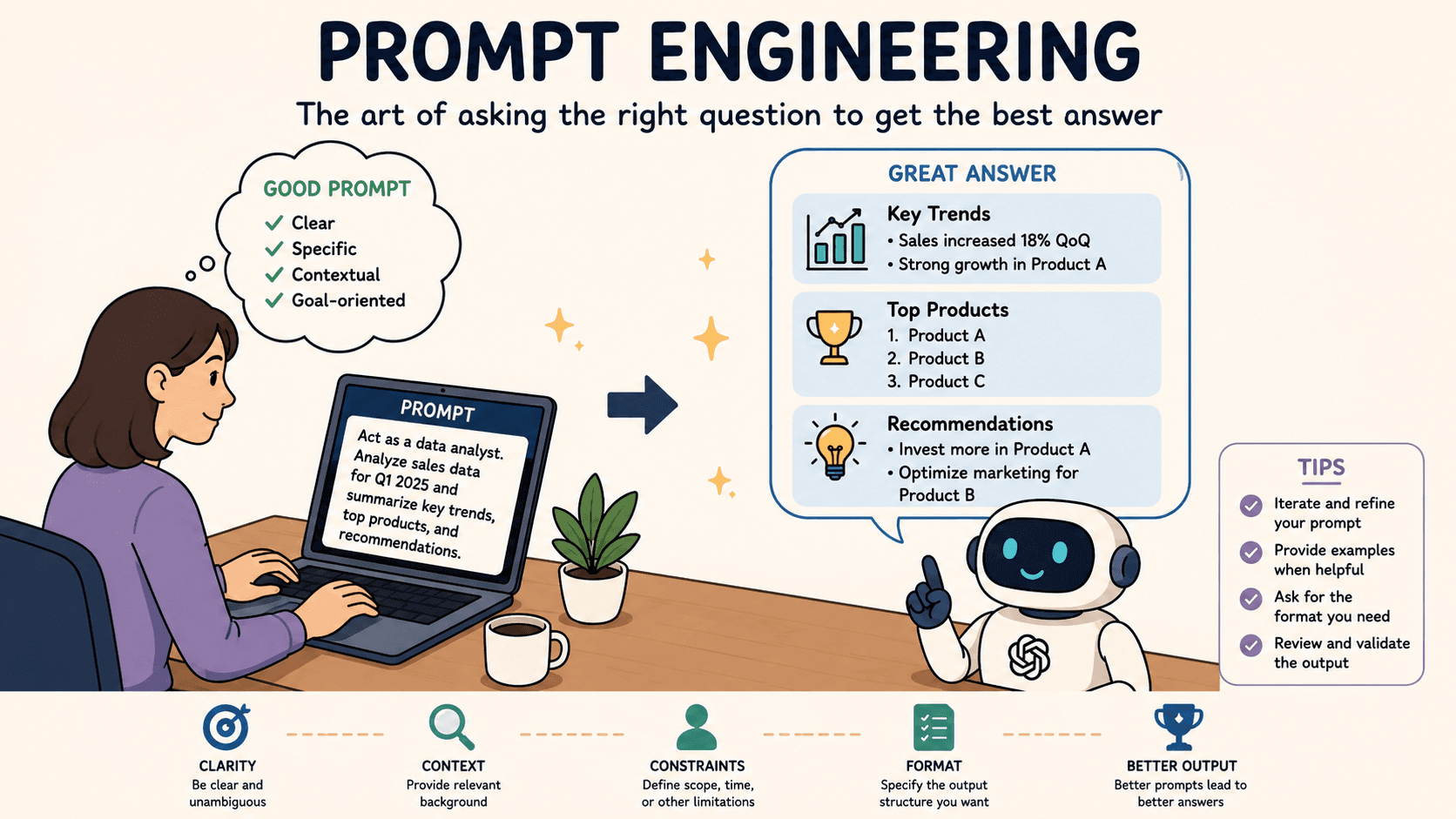

This workshop focuses on the core structural flaw of Large Language Models (LLMs). The nature of coding has changed with the advent of generative AI.

Before generative AI, writing code was a deliberate process of logical construction, but today AI has turned coding into an exercise in probabilistic guessing. "Probabilistic guessing" describes the core mathematical mechanism behind how generative AI works. LLMs do not possess logic, reasoning, or an understanding of computer science. They are sophisticated statistical prediction engines. When an AI tool writes a data pipeline, a SQL query, or a Python script, it is predicting the next most likely token (a word or fragment of code) based on patterns found in its training data.

Let's compare the human coding approach to the AI coding approach:

| The Human Process (Deterministic Logic) | The AI Process (Probabilistic Guessing) |
| --- | --- |
| Focuses on absolute cause and effect. | Focuses on statistical likelihood. |
| Understands the meaning behind database tables and business metrics. | Identifies patterns of text that frequently appear near each other in training data. |
| Executes code based on rigid, unyielding mathematical rules. | Selects the next word based on a percentage probability score. |

When you provide a prompt to an AI tool to write a query for you, it isn't thinking about your business or your data structures. It is asking itself: "Based on millions of repositories I have indexed, what is the most common sequence of characters that follows the phrase SELECT Product_Code?"

Data systems are deterministic and they require absolute precision. A misplaced comma, an incorrect table join, or a subtle change in logic can corrupt an entire financial report.

Relying solely on an AI tool built on probability to manage a system built on precision creates three major issues:

The "Almost Right" Mirage

Because AI is excellent at matching text patterns, the code it generates will look 95% perfect

It will run without errors, but the remaining 5% of probabilistic guessing might inadvertently flip a filter, skip a key piece of logic, or mix up units of measure such as currency conversions

The Randomness Factor

LLMs use a setting called "temperature" to control creativity. If you run the exact same prompt three times, a probabilistic engine can give you three slightly different versions of the code

A business cannot scale a data strategy on code that changes based on a machine's creative mood
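The effect of temperature can be sketched with a toy next-token sampler. This is a simplified illustration, not the implementation of any particular model: the three candidate tokens and their scores are invented, and the helper names are hypothetical. It shows the general idea that scores are converted into probabilities, that a temperature of zero always picks the top-scoring option, and that higher temperatures allow less likely options to be chosen.

```python
import math
import random


def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def pick_next_token(tokens, scores, temperature, rng):
    """Pick one token: always the top score at temperature 0, sampled otherwise."""
    if temperature == 0:
        return tokens[scores.index(max(scores))]
    probs = softmax_with_temperature(scores, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]


# Hypothetical candidates for the next piece of a SQL query, with made-up scores.
tokens = ["INNER JOIN", "LEFT JOIN", "CROSS JOIN"]
scores = [2.0, 1.5, 0.2]
rng = random.Random()

greedy = [pick_next_token(tokens, scores, 0, rng) for _ in range(5)]
sampled = [pick_next_token(tokens, scores, 1.0, rng) for _ in range(5)]
print(greedy)   # the same token every time
print(sampled)  # may differ from run to run
```

In this sketch an `INNER JOIN` and a `LEFT JOIN` are both plausible continuations, yet they return different rows. That is why identical prompts can produce code with different business results.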

Hallucinating the Missing Links

Unless you supply it, AI does not know your exact database schema, and it will rarely stop to ask for clarification

AI will use probability to guess what your database tables should be named based on common naming conventions, confidently inventing fictional infrastructure and objects to complete the text pattern
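One defensive check against invented tables and columns is to compile AI-generated SQL against an empty copy of the real schema before it goes anywhere near live data. The sketch below uses Python's built-in `sqlite3` module as a stand-in for your own database. The `sales` table, the `fact_sales` name, and the `check_against_schema` helper are hypothetical examples. `EXPLAIN` asks SQLite to compile the statement, so a reference to a table or column that does not exist raises an error without the query being run against any data.

```python
import sqlite3


def check_against_schema(schema_ddl, generated_sql):
    """Compile generated SQL against an empty copy of the schema.

    Returns (True, "ok") if every table and column resolves, otherwise
    (False, <database error message>).
    """
    conn = sqlite3.connect(":memory:")
    try:
        conn.executescript(schema_ddl)
        conn.execute("EXPLAIN " + generated_sql)
    except sqlite3.OperationalError as exc:
        return False, str(exc)
    finally:
        conn.close()
    return True, "ok"


# Hypothetical schema: the only table that really exists is `sales`.
SCHEMA = "CREATE TABLE sales (product_code TEXT, net_amount REAL);"

grounded = "SELECT product_code, SUM(net_amount) FROM sales GROUP BY product_code"
invented = "SELECT product_code, SUM(net_amount) FROM fact_sales GROUP BY product_code"

print(check_against_schema(SCHEMA, grounded))
print(check_against_schema(SCHEMA, invented))
```

A check like this only confirms that the named objects exist. It says nothing about whether the logic is right, so human review of the business rules is still required.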

Prompt-engineered data strategies fail when they are not handled by subject matter experts.

An Accidental Data Engineer cannot identify when a statistical guess is miles away from technical reality.

The key objectives for this workshop are:

Anchoring AI Outputs to Your Exact Business Realities

Eliminating Guesswork with Few-Shot Prompting

Mandating Defensive Guardrails in AI-Generated Code
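As an illustration of the few-shot prompting objective, the sketch below assembles a prompt that gives the model your real schema plus a small number of verified question-and-query pairs before asking the new question. The schema, the example pair, and the `build_few_shot_prompt` helper are hypothetical, and the exact prompt wording is an assumption rather than a prescribed template.

```python
def build_few_shot_prompt(schema_ddl, examples, question):
    """Assemble a prompt from the real schema, verified examples, and a new question."""
    parts = ["Use only the tables and columns in this schema:", schema_ddl.strip(), ""]
    for example_question, example_sql in examples:
        parts += [f"Question: {example_question}", f"SQL: {example_sql}", ""]
    parts += [f"Question: {question}", "SQL:"]
    return "\n".join(parts)


# Hypothetical schema and one human-verified example pair.
schema = "CREATE TABLE sales (product_code TEXT, region TEXT, net_amount REAL);"
examples = [
    (
        "Total net sales by product",
        "SELECT product_code, SUM(net_amount) FROM sales GROUP BY product_code;",
    ),
]

prompt = build_few_shot_prompt(schema, examples, "Total net sales by region")
print(prompt)
```

The examples anchor the model to your naming and your definitions of each metric, which narrows the room for guessing. The output still needs to be verified by someone who understands the data.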

Workshop 4: Solving the "Data Leakage & Cognitive Decline" Problem

This workshop focuses on the loss of corporate intellectual property and the erosion of human intellect.

When an organisation fails to govern how its staff interact with generative AI, it creates a dual crisis.

Externally, proprietary information leaks into public models

Internally, the workforce's critical thinking skills rapidly decay

There are three core threats here:

The Data Leakage Crisis

Non-technical users rushing to solve immediate problems often paste sensitive materials directly into public AI chat bars to get fast answers

In their rush to get help and work faster, employees regularly upload unmasked customer CSV files, private API keys, proprietary source code, or confidential documents such as draft merger and acquisition plans

Many public AI tools can use input data to train future models, depending on their terms and settings. This means a competitor prompting the same public AI tool could inadvertently surface your company’s internal metrics, strategic plans, or proprietary source code

This can create serious liabilities under data protection regulations such as the GDPR
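One practical guardrail is to mask obvious identifiers before any text is pasted into a public AI tool. The sketch below is a deliberately simple illustration using Python's built-in `re` module. The two patterns and the `redact` helper are hypothetical examples: they catch email addresses and strings shaped like a prefixed secret key, and nothing else. A real deployment would need a far broader rule set and should not rely on pattern matching alone.

```python
import re

# Illustrative patterns only; they are not a complete list of sensitive data.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SECRET = re.compile(r"\b(?:sk|key|token)[-_][A-Za-z0-9]{16,}\b")


def redact(text):
    """Replace email addresses and key-like strings with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = SECRET.sub("[SECRET]", text)
    return text


sample = "Contact jane.doe@example.com with key sk_abcdefghijklmnop1234"
print(redact(sample))
```

Running a step like this automatically, rather than trusting each user to remember it, keeps the human in the loop for judgment while removing the most common accidental disclosures.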

The Cognitive Decline Crisis

Complex cognitive work requires resistance to maintain its strength, much like a physical muscle.

When employees use AI to instantly bypass the struggle of debugging code, analysing messy spreadsheets, or drafting complex logic, their brains stop processing the underlying mechanics of the problem

Over a six-to-twelve-month period of over-reliance, teams can develop automation bias, a psychological state where humans stop checking for errors because they assume the automated system is infallible

When a critical data architecture breaks down or a complex system failure occurs, the de-skilled workforce lacks the baseline mental maps to diagnose or fix the problem

The Dependency Trap

By outsourcing core thinking to third-party AI algorithms, your company has subtly shifted its internal knowledge away from its employee base and into an external, subscription-based "black box"

The company becomes highly vulnerable.

If the AI provider changes its pricing, costs could spiral

If the AI provider updates its model logic, old prompts may fail

If the AI provider suffers an extended cloud outage, your company's daily operations grind to a complete halt and nobody left on your staff knows how to perform the work manually

Preventing data leakage and cognitive decline is the number one goal of a Human-in-the-Loop (HITL) strategy. Use AI to push and extend human capabilities, but do not allow AI shortcuts to make your staff obsolete.

The key objectives for this workshop are:

Eradicating Compliance and Privacy Risks in Public Prompts

Practical Frameworks to Verify Data You Didn't Personally Write

Protecting Your Team’s Critical Problem-Solving Skills

Summary

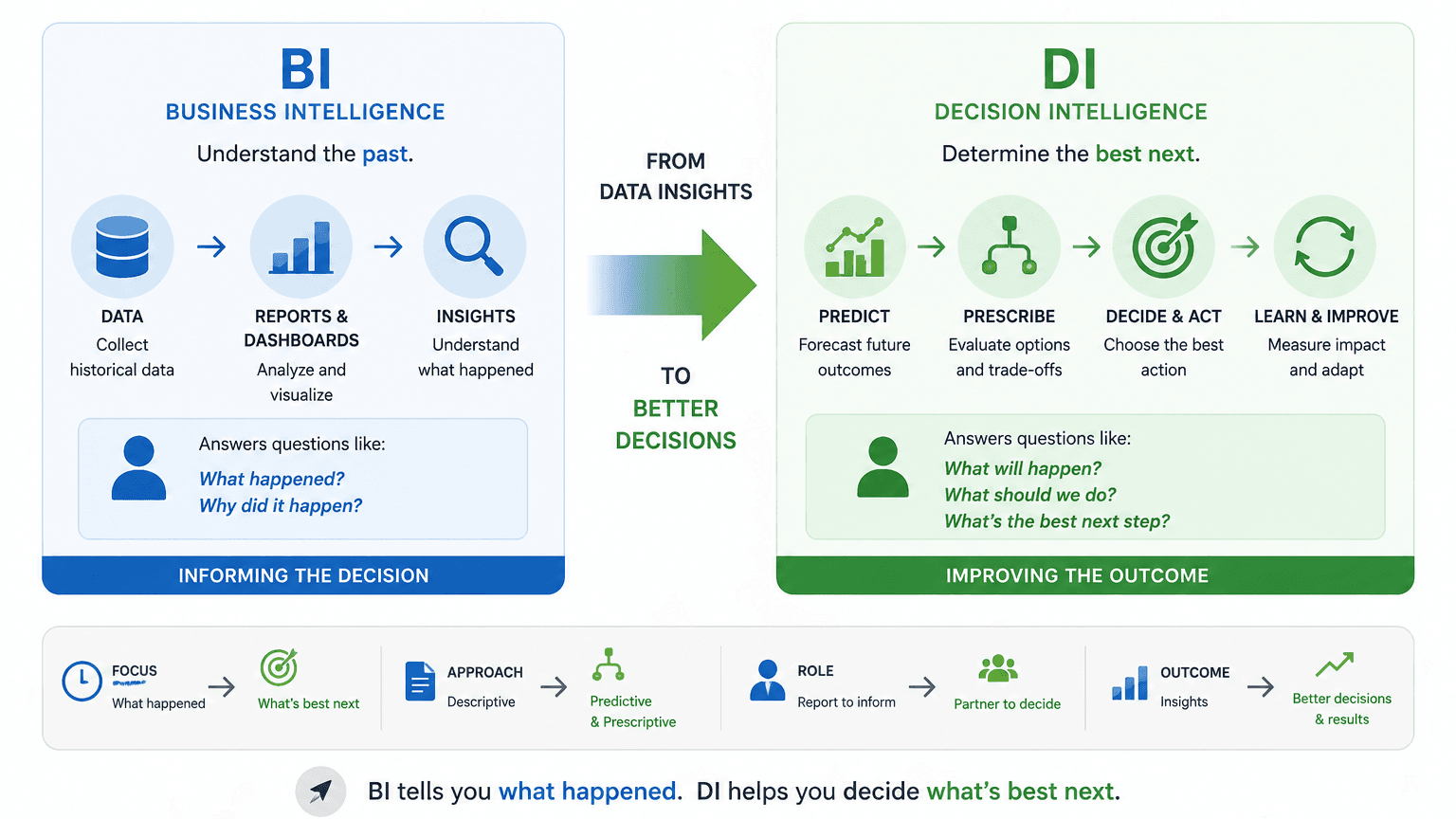

PTR provides a structured approach to AI adoption through a combination of training, consultancy, and strategic workshops designed to help organisations implement AI safely and effectively.

Our offering spans foundational AI education, Microsoft 365 Copilot training, and advanced data science capabilities, all underpinned by a strong focus on governance, data quality, and business alignment.

Central to our approach is the “AI Steering Wheel Series,” which addresses key risks such as blind trust in AI outputs, rogue data pipelines, hallucinations in AI-generated results, and data leakage, while promoting Human-in-the-Loop (HITL) practices to maintain oversight and accountability.

The overall aim is to transform employees from passive AI users into critical, skilled operators who can validate outputs, protect organisational integrity, and ensure AI is used as a controlled accelerator rather than an unchecked dependency.


Mandy Doward

Managing Director

PTR’s owner and Managing Director is a Microsoft certified Business Intelligence (BI) Consultant, with over 35 years of experience working with data analytics and BI.
